Run This Audit Before You Change Your Goal

September 2025. Beijing.

Our China office was inside a glass tower. Across the street: Microsoft’s giant logo.

That week, our team was building a side project.

The pitch was clean:

Small and mid-sized data owners store basic metadata in Supabase.

Our app classifies the dataset (domain fit, market size), then auto-generates a “ChatGPT-native app.”

Later, a ChatGPT user pays and @-mentions the app to get sharper answers.

Ambitious, but not insane.

The real pain lived in one module:

Letting data owners edit existing data through conversation.

Sounds friendly. In reality: edge cases, permissions, schema constraints, “natural language” turning into a systems problem.

Weeks passed. We got stuck in a loop:

Set goal → fail → try and fail again → redefine goal → fail again.

One afternoon, the team sat in silence. Beijing sunlight was pouring in, but the room felt cold anyway.

Then the familiar chorus started:

“Maybe I’m not capable.”

“Should we just change the goal to something more realistic?”

“We didn’t work hard enough. Maybe we need more hours.” (I have already hired this dude, and will explain later.)

“We don’t have enough people. Not enough resources.”

This isn’t a “my team” problem.

This is what most teams do after consecutive failures:

They turn failure into a moral verdict.

They treat goals like vows. If they miss, they write a confession.

They add more effort, suffer harder, punish themselves… or swap in a prettier goal to protect their ego.

But here’s what I believe now:

The purpose of a goal is not achievement.

The purpose of a goal is match-testing.

A goal exists to test whether the target, constraints, and toolchain actually MATCH.

When failure happens, you’re not supposed to write an apology.

You’re supposed to run an audit.

You Don’t Miss New York Because You’re “Not Hard-Working” or “Lack Attitude”

I keep one brutal metaphor in my head:

“24 hours from Beijing to New York.”

One person walks.

Another rides a bike.

Another takes a boat.

Another flies.

If you’re biking for three hours and you’re still not out of Beijing, what’s the correct first reaction?

Not: “My attitude isn’t pure enough.”

Not: “I should try harder and pedal faster.”

The correct first reaction is:

Is my vehicle capable of crossing this constraint? Does it match the goal at all?

Most failed goals were dead on arrival.

Not because you didn’t suffer enough.

Because you chose the wrong tools and methods.

And the most dangerous mistake is this:

When you blame failure on effort and attitude first, you lose your ability to diagnose.
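The metaphor can be checked with plain arithmetic. The sketch below is only an illustration: the distance (roughly 11,000 km great-circle) and the cruising speeds are rough assumptions, and it ignores oceans, rest, and logistics entirely.

```python
# Rough feasibility check for the "24 hours from Beijing to New York" metaphor.
# All numbers are illustrative assumptions, not measurements.

DISTANCE_KM = 11_000   # approximate great-circle distance, Beijing to New York
DEADLINE_HOURS = 24

# Assumed typical cruising speeds in km/h.
VEHICLE_SPEED_KMH = {
    "walk": 5,
    "bike": 20,
    "boat": 40,
    "plane": 900,
}


def is_feasible(speed_kmh: float, distance_km: float, deadline_hours: float) -> bool:
    """A vehicle matches the goal only if it can cover the distance in time."""
    return speed_kmh * deadline_hours >= distance_km


# Minimum average speed the goal demands, regardless of effort.
required_speed = DISTANCE_KM / DEADLINE_HOURS

for vehicle, speed in VEHICLE_SPEED_KMH.items():
    feasible = is_feasible(speed, DISTANCE_KM, DEADLINE_HOURS)
    verdict = "matches" if feasible else "dead on arrival"
    print(f"{vehicle:>5}: {speed:>4} km/h vs {required_speed:.0f} km/h required -> {verdict}")
```

Under these assumptions the goal needs about 458 km/h on average. Pedaling twice as hard moves the bike from 20 to 40 km/h, which changes nothing about the verdict.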

1 — Why People Blame Effort First (and Why That Makes You Lose Twice)

I’ve worked with teams all over the world. Humans are the same. We share the same stupidity.

When a project starts failing, I hear the same three “easy explanations” on repeat.

1) Effort is the laziest explanation

“Not enough effort” is always plausible.

Because it doesn’t force you to admit upstream errors:

your goal definition is sloppy

your constraints make success impossible

your causal model is fantasy

You don’t have to think. You just throw the easy shit: “We’ll grind.”

2) Effort protects ego

“If I just push harder, I can do it” is an ego narrative.

It sounds heroic.

It’s actually a trap.

It keeps you loyal to a broken plan because admitting the plan is wrong hurts more than working late.

3) “Lacking resources” is an easy way to outsource responsibility

This is the corporate version of prayer:

“If we had more people / more budget / more time, we’d win.”

Sometimes it’s true. Often it’s an excuse.

Because it lets you avoid the hardest question:

Under current constraints, what’s the smallest experiment that proves our method works?

People who always need more resources usually have one thing in common:

They can’t validate a path. They can only demand a bigger engine.

2 — After Failure, You Don’t Need Motivation. You Need Order.

Failure doesn’t require “inspiration.”

It requires discipline.

Not discipline as suffering.

Discipline as diagnostic order.

Debugging always has a sequence.

Upstream determines downstream.

When teams get the order wrong, four predictable mistakes appear:

You optimize tools on a wrong goal definition

You hunt methods under impossible constraints

You argue execution with no metrics

Your causal model is wrong, so you double effort and sink faster

So the real issue isn’t “lack of methods.”

It’s missing an audit order.

If you remember one thing from this piece, remember this:

When the goal fails, run the audit from top to bottom.

3 — The Audit Ladder: Run This Before You Change Your Goal

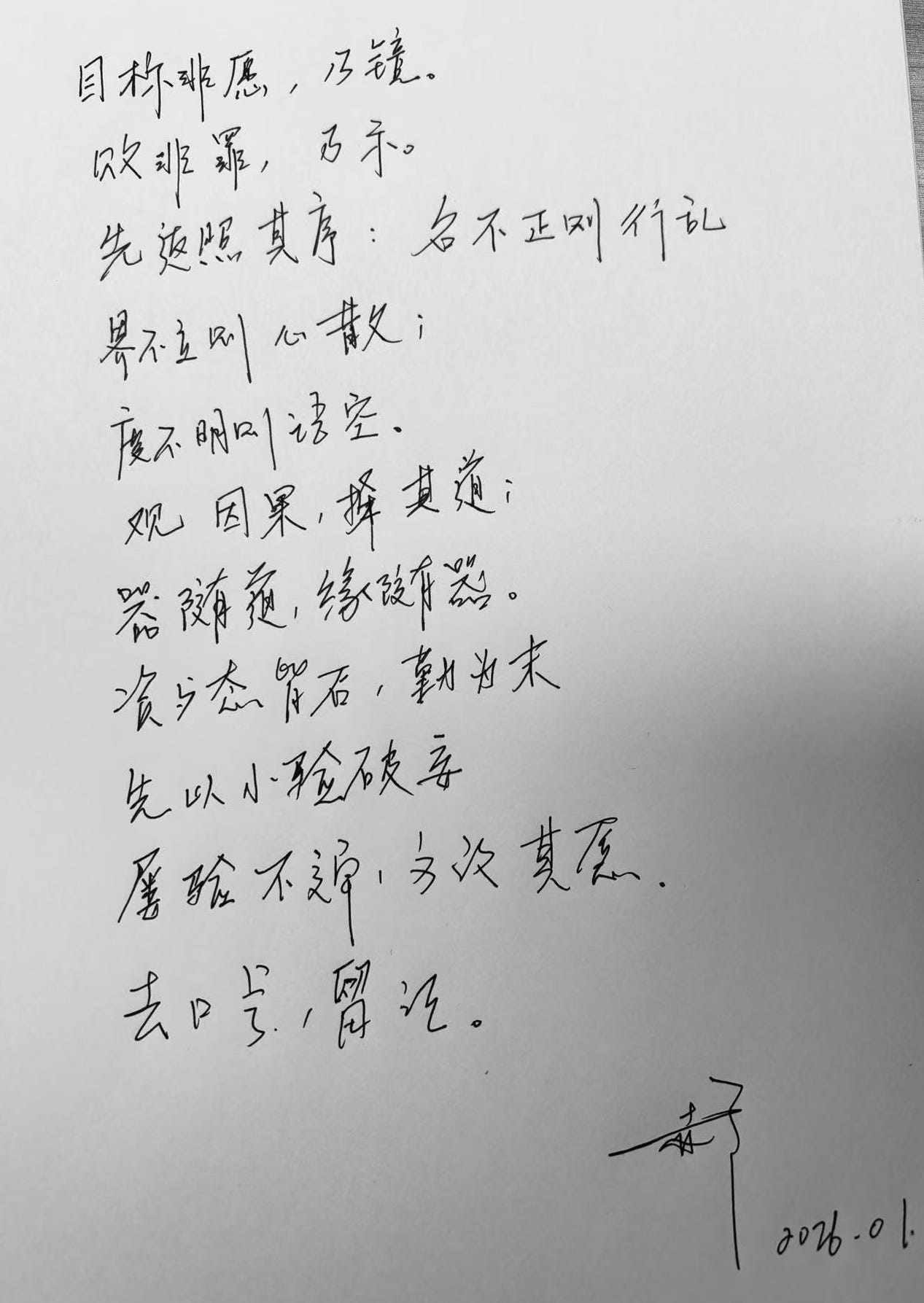

Screenshot this. Print it. Put it on the wall.

Step 1 — Definition / Constraints / Measurement

Definition

What is the goal in one sentence: who does what to achieve what result by when?

What does success look like in a verifiable form? (number / state / deliverable standard)

Constraints

What are the real limits? (time window / cost ceiling / red lines / must-have conditions)

Do these constraints make certain paths impossible? (the “bike to New York” problem)

Measurement

What metric tells you you’re moving? Where does it come from? Who logs it?

What’s the leading indicator? What’s the review cadence? (daily / weekly / milestone)

If Step 1 is unclear, everything after is fake progress.
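One way to make Step 1 hard to skip is to write it down as a record and check for blanks. This is a minimal sketch: the `GoalSpec` class, its field names, and the `missing_fields` helper are hypothetical names chosen for illustration, and the example values are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class GoalSpec:
    # Definition: who does what to achieve what result by when
    who: str = ""
    action: str = ""
    result: str = ""
    deadline: str = ""
    success_criterion: str = ""   # number / state / deliverable standard
    # Constraints: time window, cost ceiling, red lines, must-haves
    constraints: list[str] = field(default_factory=list)
    # Measurement
    metric: str = ""
    metric_source: str = ""
    metric_owner: str = ""
    leading_indicator: str = ""
    review_cadence: str = ""      # daily / weekly / milestone


def missing_fields(spec: GoalSpec) -> list[str]:
    """Return the names of Step 1 fields that are still empty."""
    return [name for name, value in vars(spec).items() if not value]


# A placeholder draft: the definition is half written, nothing else is.
draft = GoalSpec(
    who="data team",
    action="ship conversational editing",
    result="owners can edit records by chat",
)
print(missing_fields(draft))
```

If the list that comes back is not empty, the audit stops here. There is no point moving to tools or methods yet.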

Step 2 — Tools (Software tools + thinking tools)

Where is the biggest resistance: info, production, communication, execution, tracking, review?

Is there a tool that directly reduces resistance?

Are we using tools to amplify output—or to manufacture complexity?

Tool value has one job: reduce resistance at the bottleneck.

Tools don’t replace clarity. Tools don’t replace evidence.

Step 3 — Method / Process

What is the repeatable weekly process? (not vibes, not “we’ll push”)

What is “done” for each step?

Where exactly does it stall—missing process, or process not followed?

No process, no compounding.

Step 4 — Resources

What’s the real constraint: money, people, time, info, distribution, permissions?

Under current resources, what’s the smallest validating experiment you can run?

Is “lack of resources” truly first-order—or did you refuse to cut scope to MVP?

Resources are always insufficient.

The usual failure is refusing to shrink the problem to a testable slice.

Step 5 — State / Attitude

What’s the personal failure mode: fear of rejection, perfectionism, uncertainty avoidance?

What intervention works: sleep, boundaries, focus blocks, emotional tools?

Are we using “attitude talk” to hide engineering problems?

Attitude matters. It’s just late in the chain.

Put it first and you’re using morality to cover systems failure.

Step 6 — Effort

Only after the upstream is correct, ask:

Is effort sufficient, consistent, sustained?

If we add effort, where exactly? How much? What metric proves it worked?

Effort is not the steering wheel. Effort is the gas pedal.

The steering wheel is Steps 1–3.
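The ladder is an ordered check that stops at the first step that does not hold. Here is a minimal sketch of that idea, assuming each step can be reduced to a yes/no answer. The names `AUDIT_LADDER` and `first_failing_step` are hypothetical.

```python
from typing import Optional

# The six steps, in the order the audit must be run.
AUDIT_LADDER = [
    "definition / constraints / measurement",
    "tools",
    "method / process",
    "resources",
    "state / attitude",
    "effort",
]


def first_failing_step(passed: dict[str, bool]) -> Optional[str]:
    """Walk the ladder top to bottom and return the first step that fails.

    A step that was never reviewed counts as failing, so nobody can jump
    straight to "effort" by skipping the upstream checks.
    """
    for step in AUDIT_LADDER:
        if not passed.get(step, False):
            return step
    return None


# Example: the team wants to talk about effort, but the weekly process
# was never reviewed.
review = {
    "definition / constraints / measurement": True,
    "tools": True,
    "effort": False,
}
print(first_failing_step(review))
```

In this example the audit lands on “method / process,” not on effort, because an unreviewed upstream step blocks everything below it.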

4 — When to Change the Toolchain vs When to Change the Goal

The obvious pushback is:

“What if the goal is literally impossible?”

Good. That’s why the rule must be ruthless.

Decision Rule: Disprove method before you touch the goal

Run the audit ladder

Select the single biggest bottleneck (only one)

Design a 72-hour experiment (small, measurable, undeniable)

Repeat for 2–3 cycles

Only if the evidence shows:

the bottleneck can’t be fixed under current constraints

or

fixing it costs wildly more than it’s worth

…then you change the goal / constraints / timeline.

This rule matters because it blocks ego-driven pivots.

You don’t change goals to feel better.

You change goals because the evidence forced you.
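The decision rule can be sketched as a small function over the experiment log. This is one possible reading, not a definitive implementation: it assumes “2–3 cycles” means at least two consecutive experiments must come back negative before the goal is touched. `Verdict`, `Experiment`, `MIN_CYCLES`, and `next_move` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    FIXED = "bottleneck fixed"
    UNFIXABLE = "cannot be fixed under current constraints"
    TOO_COSTLY = "fix costs far more than it is worth"
    INCONCLUSIVE = "no clear evidence yet"


@dataclass
class Experiment:
    bottleneck: str   # the single bottleneck this 72-hour test attacked
    verdict: Verdict


MIN_CYCLES = 2  # assumption: lower bound of the "2-3 cycles" in the rule


def next_move(history: list[Experiment]) -> str:
    """Decide whether the evidence so far justifies changing the goal."""
    if history and history[-1].verdict is Verdict.FIXED:
        return "keep the goal; re-run the audit for the next bottleneck"
    if len(history) < MIN_CYCLES:
        return "keep the goal; run another 72-hour experiment"
    recent = history[-MIN_CYCLES:]
    negative = (Verdict.UNFIXABLE, Verdict.TOO_COSTLY)
    if all(e.verdict in negative for e in recent):
        return "change the goal, constraints, or timeline"
    return "keep the goal; run another 72-hour experiment"
```

The point of the design is that a goal change is never the default branch. It can only be reached through logged, negative evidence.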

5 — Failure Is a Team Filter

I don’t evaluate teams the same way anymore.

I watch how they speak after failure, because failure is a filter.

After failure, discussion must follow this order:

Goal definition → constraints → metrics → causal assumptions → strategy/method → tools → resources → skills/state → effort.

Anyone who drags “effort/attitude/resources” to the top is contaminating the team (sorry to say this, but it’s real).

Three archetypes get removed fast. That’s it.

Cut #1: “Just work harder” people

What they’re doing

They trade overtime for moral superiority: “I’m grinding, so I can’t be wrong.”

The moment the team returns to suffering narratives, causal reasoning dies.

Their phrases

“We just need to push harder.”

“We didn’t put in enough resources.”

“Give me more time, I can make it work.”

“Just ship it first—don’t overthink definition.”

“Stop thinking, just do.”

One-line test

Ask:

“When you say ‘work harder,’ which upstream assumption are you changing—and what metric proves it?”

If they can’t answer with assumptions/metrics/process changes, and can only offer more hours, they’re a system breaker.

Cut #2: “My attitude isn’t good” people

These are sneaky because they sound humble.

But they turn failure into therapy, and the team stops examining causality.

Their phrases

“I’ve been mentally tired and it shows.”

“My energy’s been low and I’m slipping.”

“I start strong, then I stop when it gets boring.”

“I’m not sure I want this badly enough.”

Test

Ask:

“Which variable will you change next cycle—and how will we verify it?”

If they keep circling feelings and identity with no measurable action, they turn postmortems into comfort sessions.

Cut #3: “We don’t have resources” people, who outsource failure to the universe

Resources matter. Of course.

But some people use “resources” as a permanent immunity badge:

“If we had enough, we’d win.”

The hidden cost: they never have to prove the method.

Their phrases

“Not enough people / money / time.”

“Once we hire / once we raise / once the market improves…”

“Without resources there’s no point discussing method.”

Test

Ask:

“Under current constraints, what is the smallest validating experiment?”

If they can’t propose an MVP test and only demand more resources, they’re not solving problems. They’re delaying accountability.

The People You Keep: System Builders

The keepers have a different language.

They don’t moralize. They instrument.

Their phrases

“Define success: what’s the metric?”

“What are the constraints, explicitly?”

“We think A drives B—where’s the evidence?”

“Stop debating. Run a 72-hour test.”

“Where’s the bottleneck? Can a tool reduce resistance?”

“We don’t need more effort. We need a higher hit-rate path.”

They do three things consistently:

They make the problem legible

They collapse debate into experiments

They obsess over upstream variables

That’s not “personality.”

That’s how you build a team that compounds.

Closing: The One-Page Audit Checklist (Do This Today)

If your goal just failed, do not “reflect.”

Do this.

The 15-minute Audit

Write the goal in one sentence (who / what / result / deadline)

List the constraints (time, cost, red lines)

Write the success metric + leading metric

Identify the single biggest bottleneck

Define a 72-hour experiment to attack it

Assign an owner + daily check-in metric